
Apple snubs Nvidia for AI training kit, chooses Google

Apple has detailed in a research paper how it trained its latest generative AI models using Google’s neural-network accelerators rather than, say, more fashionable Nvidia hardware.

The paper, titled “Apple Intelligence Foundation Language Models,” provides a deep-ish dive into the inner workings of the iPhone titan’s take on LLMs, from training to inference.

These language models are the neural networks that turn queries and prompts into text and images, and power the so-called Apple Intelligence features being baked into Cupertino’s operating systems. They handle tasks such as summarizing text and suggesting wording for messages.

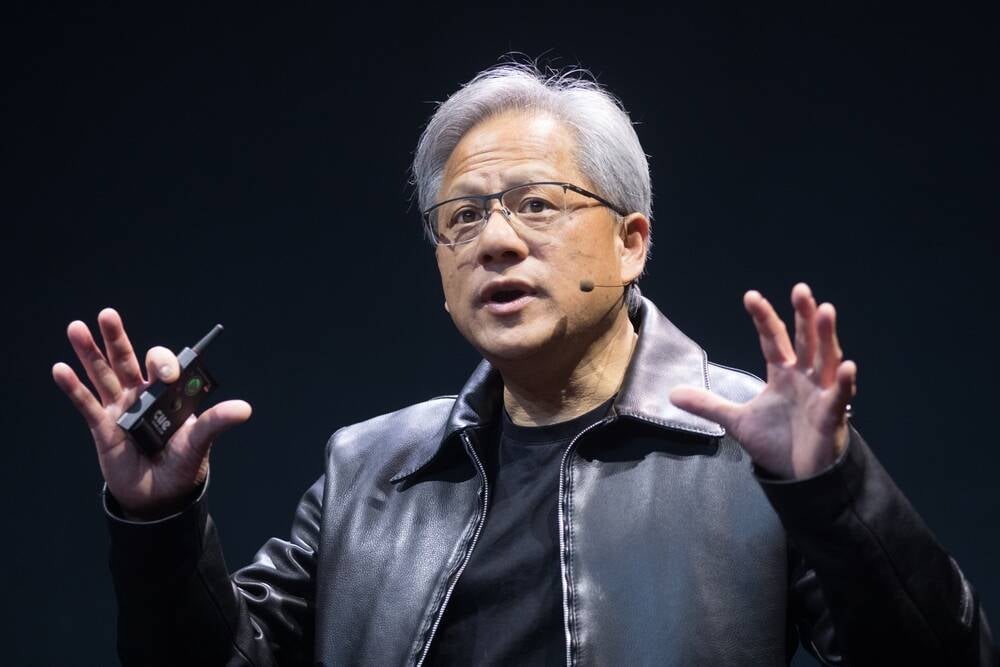

While most AI orgs clamor for Nvidia GPUs, especially the H100 until Blackwell comes along – and may be eyeing up offerings from AMD, Intel, and others – when it comes to training machine learning systems Apple decided to choose Google’s Tensor Processing Unit (TPU) silicon. It’s not entirely surprising, as the Mac titan and Nvidia have been on bad terms for some years now for various reasons, and it seems Cook & Co have little interest in patching things up for the sake of training Apple Foundation Models (AFMs).

What is surprising is that Apple didn’t turn to Radeon GPUs from AMD, which has previously supplied chips for Mac devices. Instead, Apple chose Google and its TPU v4 and TPU v5 processors for training its AFMs.

Yes, this is the same Google that Apple criticized just last week over user privacy with respect to serving online ads. But on the hardware side of things everything appears to be cozy.

Apple’s server-side AI model, AFM-server, was trained on 8,192 TPU v4 chips, while AFM-on-device used 2,048 newer TPU v5 processors. For reference, Nvidia claims training a GPT-4-class AI model requires around 8,000 H100 GPUs, so it would seem in Apple’s experience the TPU v4 is about equivalent, at least in terms of accelerator count.

For Cupertino, it might not just be about avoiding Nvidia GPUs. Since 2021, Google’s TPUs have seen explosive growth, to the point where only Nvidia and Intel have greater market share, according to a study published in May.

Users prefer responses from our models, Apple claims

Apple claims its models beat some of those from Meta, OpenAI, Anthropic, and even Google itself. The research paper doesn’t go into much detail about AFM-server’s specifications, though it does make lots of hay about how AFM-on-device has just under three billion parameters and has been quantized to fewer than four bits per weight on average for the sake of efficiency.
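For a sense of why that matters on a phone, here is a back-of-the-envelope sketch of weight storage using the article's upper bounds (three billion parameters, four bits each). The function name and the 16-bit comparison point are our own assumptions for illustration, not figures from Apple's paper, and the estimate ignores activations, caches, and other runtime overhead.

```python
def weight_footprint_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate storage for model weights in gigabytes (1 GB = 1e9 bytes)."""
    total_bits = num_params * bits_per_weight
    return total_bits / 8 / 1e9


# Upper bounds from the article: just under 3e9 parameters, under 4 bits each.
quantized = weight_footprint_gb(3e9, 4)

# The 16-bit baseline is an assumed comparison point, not a number from the paper.
baseline = weight_footprint_gb(3e9, 16)

print(f"4-bit weights: under {quantized:.1f} GB; 16-bit weights: about {baseline:.1f} GB")
```

On those assumptions the quantized weights come to under 1.5 GB, against roughly 6 GB at 16 bits.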

Although AI models can be evaluated with standardized benchmarks, Apple says it “find[s] human evaluation to align better with user experience and provide a better evaluation signal than some academic benchmarks.” To that end, the iMaker presented real people with two different responses for the same prompt from different models, and asked them to choose which one was better.

However, the prompts and responses are not provided, so you’ll just have to take Apple’s word for it.
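To make the side-by-side method concrete, here is a minimal sketch of how such verdicts might be tallied into win, tie, and loss rates. The labels, the tie option, and the sample data are all hypothetical; this is not Apple's evaluation code or data.

```python
from collections import Counter


def preference_rates(verdicts):
    """Return win/tie/loss fractions for model A from grader verdicts.

    Each verdict is 'A', 'B', or 'tie' (hypothetical labels).
    """
    total = len(verdicts)
    if total == 0:
        raise ValueError("no verdicts to tally")
    counts = Counter(verdicts)
    return {
        "win": counts["A"] / total,
        "tie": counts["tie"] / total,
        "loss": counts["B"] / total,
    }


# Made-up verdicts for illustration only; not Apple's data.
sample = ["A", "B", "A", "tie", "A", "B", "tie", "A"]
rates = preference_rates(sample)
print(rates)
```

A model can therefore be said to win more often than it loses without being preferred in a majority of comparisons, which is worth keeping in mind when reading the results.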

While Apple claimed its AFMs are “often preferred over competitor models” by humans grading their outputs, its models actually only seemed to score in second or third place overall. AFM-on-device won more often than it lost against Gemma 7B, Phi 3 Mini, and Mistral 7B, but couldn’t get the win against LLaMa 3 8B. The paper did not include numbers for GPT-4o Mini.

Meanwhile, AFM-server wasn’t really a match for GPT-4 and LLaMa 3 70B. We can probably guess it doesn’t fare too well against GPT-4o and LLaMa 3.1 405B either.

Apple sort-of justifies itself by demonstrating that AFM-on-device outperformed all small models for the summarization tool in Apple Intelligence, despite being the smallest model tested. That’s just one feature, though, and it’s curious that Apple didn’t show similar data for other tools.

Cupertino also claims a big victory in generating safe content. While AFM-on-device and -server output harmful responses 7.5 percent and 6.3 percent of the time respectively, all other models did so at least ten percent of the time, apparently. Mistral 7B and Mixtral 8x22B were the biggest offenders at 51.3 percent and 47.5 percent respectively, Apple claimed. ®